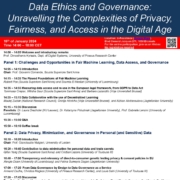

The LeADS project is organising a conference titled “Data Ethics and Governance: Unravelling the Complexities of Privacy, Fairness, and Access in the Digital Era”, to be held in hybrid mode at U-Residency (VUB Etterbeek Campus) on Monday, 15th January 2024. To participate remotely, you may join us here.

Below you can find the detailed programme:

14:00 – 14:05 Welcome and introductory remarks

Prof. Dimosthenis Kyriazis, Dept. of Digital Systems, University of Piraeus

Panel 1: Challenges and Opportunities in Fair Machine Learning, Data Access, and Governance

14:05 – 14:15 Introduction

Chair: Prof. Giovanni Comandé, Scuola Superiore Sant’Anna

14:15 – 14:35 The Flawed Foundations of Fair Machine Learning

Robert Poe (Scuola Superiore Sant’Anna) and Soumia El Mestari (University of Luxembourg)

14:35 – 14:55 Measuring data access and re-use in the European legal framework, from GDPR to Data Act

Tommaso Crepax (Scuola Superiore Sant’Anna), Mitisha Gaur (Scuola Superiore Sant’Anna) and Barbara Lazarotto (Vrije Universiteit Brussel)

14:55 – 15:15 Data Collaborative with the use of Decentralized Learning

Maciej Zuziak (Consiglio Nazionale delle Ricerche), Onntje Hinrichs (Vrije Universiteit Brussel), and Aizhan Abdrassulova (Jagiellonian University)

15:15 – 15:35 Discussion

Panelists:

- Dr. Laura Drechsler, KU Leuven

- Dr. Katarzyna Poludniak, Jagiellonian University

- Prof. Gabriele Lenzini, University of Luxembourg

15:35 – 15:50 Q&A

15:50 – 16:10 Coffee break

Panel 2: Data Privacy, Minimization, and Governance in Personal (and Sensitive) Data

16:10 – 16:20 Introduction

Chair: Prof. Gianclaudio Malgieri, University of Leiden

16:20 – 16:40 Contribution to data minimisation for personal data and trade secrets

Qifan Yang (Scuola Superiore Sant’Anna) and Cristian Lepore (University of Toulouse III)

16:40 – 17:00 Transparency and relevancy of direct-to-consumer genetic testing privacy & consent policies in EU

Xengie Doan (University of Luxembourg) and Fatma Sumeyra Dogan (Jagiellonian University)

17:00 – 17:20 From Data Governance by Design to Data Governance as a Service

Armend Duzha (University of Piraeus), Christos Magkos (University of Piraeus), and Louis Sahi (University of Toulouse III)

17:20 – 17:45 Discussion

Panelists:

- Prof. Elwira Macierzyńska-Franaszczyk, Jagiellonian University

- Dr. Arianna Rossi, Scuola Superiore Sant’Anna

- Dr. Afonso Ferreira, Centre National de la Recherche Scientifique

- Prof. Michail Philipakis, University of Piraeus

17:45 – 18:00 Q&A

Participation is free and open to all.

We are looking forward to sharing our research and having fruitful discussions surrounding our project.

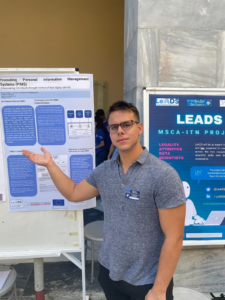

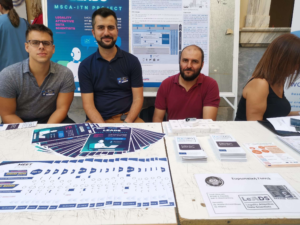

This annual event brought together all universities and research centers of the Attica region to present their ongoing activities. We were present at the “European Corner”, where the MSCA fellows presented their posters and the ESRs answered questions from the audience as well as from other researchers and students of both universities and schools.
